How to automate striping setup on Lustre using iRODS for data ingest management

If iRODS [1] is used to manage petabytes of data on a high-performance filesystem like Lustre [2], you may want to set some specific parameters (like striping) before uploading the data set. This can usually be done with command-line tools; however, when you expect high I/O throughput, calling os.system from the Python rule engine for every…
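One way to avoid spawning a shell for every file is to set a default layout once on the destination directory, because new files created in a Lustre directory inherit that directory's default striping. The minimal sketch below is not necessarily the approach taken in the full post. The sizing policy (one stripe per GiB of expected data, capped at 16), the `4M` stripe size, the helper names and the `/lustre/...` paths are all hypothetical; only `lfs setstripe -c <count> -S <size> <path>` is the real Lustre CLI.

```python
from __future__ import annotations

import shutil
import subprocess


def choose_stripe_count(expected_bytes: int, max_stripes: int = 16,
                        bytes_per_stripe: int = 1 << 30) -> int:
    """Hypothetical policy: one OST stripe per GiB of expected data, capped."""
    if expected_bytes <= 0:
        return 1
    count = -(-expected_bytes // bytes_per_stripe)  # integer ceiling division
    return max(1, min(count, max_stripes))


def setstripe_argv(path: str, stripe_count: int, stripe_size: str = "4M") -> list[str]:
    """Build an `lfs setstripe` argument list (passed as a list, so no shell is involved)."""
    return ["lfs", "setstripe", "-c", str(stripe_count), "-S", stripe_size, path]


def apply_dir_layout(path: str, expected_bytes: int, dry_run: bool = True) -> list[str]:
    """Set the default layout once on an ingest directory instead of once per file.

    Files created later inside `path` inherit this layout. With dry_run=True
    the command is only returned, which is handy for testing off-cluster.
    """
    argv = setstripe_argv(path, choose_stripe_count(expected_bytes))
    if not dry_run:
        if shutil.which("lfs") is None:
            raise RuntimeError("lfs not found; is this host a Lustre client?")
        subprocess.run(argv, check=True)
    return argv
```

For example, `apply_dir_layout("/lustre/project/ingest", 50 * 2**30)` returns `["lfs", "setstripe", "-c", "16", "-S", "4M", "/lustre/project/ingest"]`: 50 GiB would ask for 50 stripes, which the cap reduces to 16. Such a function could be called from a rule-engine hook before a collection is ingested, so the cost of one external command is paid per directory rather than per file.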

Tag: hpc

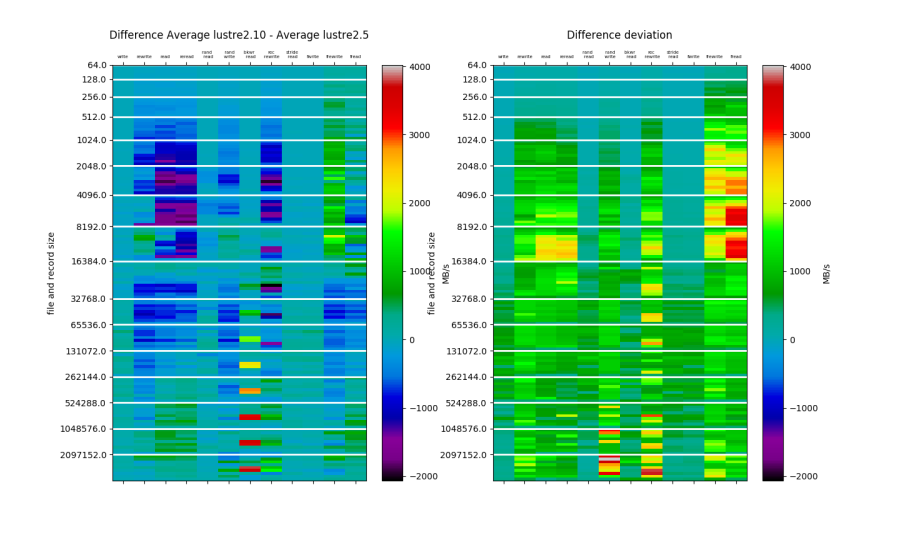

Analysing the performance of a shared file system on a production cluster.

Shared filesystem performance analysis on a production cluster without a maintenance window – Lustre client 2.5 vs 2.10

Slurm in Lightsail

In the previous post I played with AWS Lightsail, a simple service providing virtual machines. Having years of experience administering HPC systems, I thought about configuring a Slurm [1] cluster based on Lightsail.

Slurm cluster in AWS Lightsail

Automatic node provisioning is already available in Slurm [2]; it is even called “Elastic computing”, which…
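Slurm's power-saving ("elastic") mode calls a site-provided `ResumeProgram` with a single argument: a hostlist expression naming the nodes to bring up. The minimal Python sketch below shows what such a program might look like for Lightsail. It assumes, hypothetically, that every Slurm node name equals the name of an existing, stopped Lightsail instance, and it uses the AWS CLI command `aws lightsail start-instance --instance-name`. The small hostlist expander handles only one bracket group per name; on a real cluster `scontrol show hostnames` is the authoritative expander.

```python
import re
import subprocess
import sys


def split_top_level(expr: str) -> list:
    """Split 'a[1-2,5],b' on commas that are not inside brackets."""
    parts, depth, cur = [], 0, ""
    for ch in expr:
        if ch == "[":
            depth += 1
        elif ch == "]":
            depth -= 1
        if ch == "," and depth == 0:
            parts.append(cur)
            cur = ""
        else:
            cur += ch
    if cur:
        parts.append(cur)
    return parts


def expand_hostlist(expr: str) -> list:
    """Expand a simple Slurm hostlist such as 'node[01-03,07],login1'."""
    hosts = []
    for part in split_top_level(expr):
        m = re.fullmatch(r"([^\[]*)\[([^\]]+)\](.*)", part)
        if not m:
            hosts.append(part)  # plain host name, nothing to expand
            continue
        prefix, ranges, suffix = m.groups()
        for r in ranges.split(","):
            lo, _, hi = r.partition("-")
            hi = hi or lo
            width = len(lo)  # preserve zero padding, e.g. 01-03
            for i in range(int(lo), int(hi) + 1):
                hosts.append(f"{prefix}{i:0{width}d}{suffix}")
    return hosts


def resume_commands(hostlist_expr: str) -> list:
    """Hypothetical mapping: Slurm node name == Lightsail instance name."""
    return [["aws", "lightsail", "start-instance", "--instance-name", host]
            for host in expand_hostlist(hostlist_expr)]


if __name__ == "__main__":
    # Slurm passes the hostlist of nodes to resume as the only argument.
    for argv in resume_commands(sys.argv[1]):
        subprocess.run(argv, check=True)
```

For instance, `expand_hostlist("node[1-2,5],login1")` gives `["node1", "node2", "node5", "login1"]`. A matching `SuspendProgram` could follow the same pattern with `aws lightsail stop-instance`; keeping instances stopped rather than deleted is a design choice that trades some storage cost for faster resume.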